Abstract.

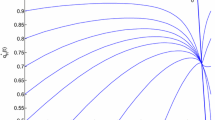

This paper establishes a relationship between evolutionary game dynamics and distributed recency-weighted Monte Carlo learning. After reviewing existing theories of replicator dynamics and agent-based Monte Carlo learning, we prove that the two models are equivalent at the level of their formulations. The relationship is demonstrated both theoretically and through computational simulations of the models. As a consequence, macro-level dynamic patterns generated by distributed micro-decisions can be explained by parameters defined at the individual level. In particular, given the equivalent formulations, we investigate how the rate of agents’ recency weighting in learning affects the emergent evolutionary game dynamic patterns. An increase in this rate reduces inertia, makes the evolutionary stability condition stricter, and increases the evolutionary speed toward equilibrium.
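As a rough illustration of the two ingredients named in the abstract, the following sketch shows a generic exponential recency-weighted value update and one Euler step of the textbook replicator dynamics. It is not the paper's formulation or its equivalence proof: the function names, the payoff matrix, the step size `dt`, and the learning rate `alpha` are all assumptions chosen for illustration.

```python
import numpy as np

def recency_weighted_update(q, reward, alpha):
    """Generic exponential recency-weighted average (assumed form):
    q <- q + alpha * (reward - q). A larger alpha weights recent
    rewards more heavily, so the estimate carries less inertia."""
    return q + alpha * (reward - q)

def replicator_step(x, payoff_matrix, dt):
    """One Euler step of the standard replicator dynamics:
    dx_i/dt = x_i * (f_i - average fitness), with f = A x."""
    fitness = payoff_matrix @ x       # payoff of each pure strategy
    average = x @ fitness             # population-average payoff
    return x + dt * x * (fitness - average)

# Hypothetical 2-strategy game, used only to exercise the functions.
A = np.array([[1.0, 0.0],
              [0.0, 2.0]])
x = np.array([0.5, 0.5])
x_next = replicator_step(x, A, dt=0.1)

# The same reward moves the estimate further when alpha is larger.
slow = recency_weighted_update(0.0, 1.0, alpha=0.1)
fast = recency_weighted_update(0.0, 1.0, alpha=0.5)
```

In this toy setting, the larger `alpha` moves the estimate further toward the latest reward in a single update, which is the intuition behind the abstract's statement that a higher recency-weighting rate lowers inertia and speeds up the dynamics.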

JEL Classification:

C63, C73

Supervision and advice from Arthur J. Caplan contributed greatly to this paper. I am also grateful to the anonymous reviewers for their valuable comments, which guided me toward a more appropriate presentation.

Cite this article

Sasaki, Y. Evolutionary game dynamics and distributed recency-weighted learning. J Evol Econ 15, 365–391 (2005). https://doi.org/10.1007/s00191-005-0254-z
