Abstract

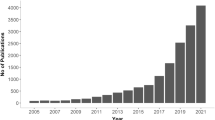

This paper describes the mechanism behind the public perception of risk from artificial intelligence (AI). To that end, we apply the social amplification of risk framework to data collected from Twitter from 2007 to 2018. We analyze when and how an association between risk and AI became significantly represented in public awareness of AI. A key finding is that the image of AI risk is mostly associated with existential risks, which became popular after the fourth quarter of 2014. The source was the public positioning of experts, who, rather than actual disasters, have so far been the real movers of the risk perception of AI. We analyze how this kind of risk was amplified, its secondary effects, the varieties of risk unrelated to existential risk, and the dynamics of experts in addressing their concerns to a lay audience.

Notes

As we saw in the introduction, existential risks are events that threaten “to cause the extinction of Earth-originating intelligent life” (Bostrom 2002, p. 381).

Showing how this critical moment of risk communication propagated over its life cycle would require a further study, with a network analysis reconstructing the spread.

See Levy (2007). Some groups regard sex bots as a risk, for different reasons. On the one hand, feminist groups argue that sex bots will only reinforce gender discrimination and stereotypes. On the other hand, conservative religious groups oppose hedonism as a matter of principle.

The propensity to take corresponding actions may lead to behavioral patterns that generate secondary social or economic consequences extending far beyond direct harm to humans or the environment, including significant indirect impacts such as liability, insurance costs, or loss of trust in institutions (Kasperson et al. 1988). The consequences can also be good or neutral, such as the founding of new companies and institutes, as in the case of AI. Such secondary effects often trigger demands for additional institutional responses and protective actions (like the White House policy initiative) or, conversely (in the case of risk attenuation), place impediments in the path of needed protective actions. An interesting contribution for further studies would be an analysis of how media institutions (profiles and websites) frame the risk perception of AI, and of how and why laypeople amplify specific risks. In this sense, research on risk perception may benefit from further work on the role that experts play not only in assessing risk, but also in communicating it to the public and, sometimes, in shaping or formulating the risk itself.

References

Baert P, Morgan M (2017) A performative framework for the study of intellectuals. Eur J Soc Theory. https://doi.org/10.1177/1368431017690737

Beck U (1986) Risikogesellschaft: auf dem Weg in eine andere Moderne. Suhrkamp, Frankfurt am Main

Beck U (1999) World risk society. Polity, Malden

Beck U (2007) Weltrisikogesellschaft: Auf der Suche nach der verlorenen Sicherheit. Suhrkamp, Frankfurt am Main

Benthin A, Slovic P, Moran P, Severson H, Mertz CK, Gerrard M (1995) Adolescent health-threatening and health-enhancing behaviors: a study of word association and imagery. J Adolesc Health 17:143–152

Binder A (2012) Figuring out #Fukushima: an initial look at functions and content of US Twitter commentary about nuclear risk. Environ Commun J Nature Culture 6(2):268–277

Bostrom N (2002) Existential risks: analyzing human extinction scenarios and related hazards. J Evol Technol 9

Bostrom N, Ćirković MM (2008) Global catastrophic risks. Oxford University Press

Bostrom N (2014) Superintelligence: paths, dangers, strategies. Oxford University Press, Oxford

Brynjolfsson E, McAfee A (2014) The second machine age. W.W.Norton & Company, New York

Cellan-Jones R (2014) Stephen Hawking warns artificial intelligence could end mankind. BBC Technology. https://www.bbc.co.uk/news/technology-30290540. Accessed 2 Dec 2014

CFTC and SEC (Commodity Futures Trading Commission and Securities and Exchange Commission) (2010). Findings Regarding the Market Events of May 6, 2010: Report of the Staffs of the CFTC and SEC to the Joint Advisory Committee on Emerging Regulatory Issues. Washington, DC

Chong M, Choy M (2018) The social amplification of haze-related risks on the internet. Health Commun 33(1):14–21. https://doi.org/10.1080/10410236.2016.1242031

Chung IJ (2011) Social amplification of risk in the internet environment. Risk Anal 31(12):1883–1896

Douglas M (1985) Risk acceptability according to the social sciences. Routledge & Kegan Paul, London

Douglas M (1986) How Institutions Think. Syracuse University Press, Syracuse, NY

Douglas M (1990) Risk as a forensic resource. Daedalus 119(4):1–16

Douglas M (1992) Risk and blame: essays in cultural theory. Routledge, London; New York

Farrell M (2012) Knight’s bizarre trades rattle markets. CNN. http://buzz.money.cnn.com/2012/08/01/trading-glitch/. Accessed 1 Aug 2012

Fellenor J, Barnett J, Potter C, Urquhart J, Mumford JD, Quine CP (2018) The social amplification of risk on Twitter: the case of ash dieback disease in the United Kingdom. J Risk Res 21(10):1163–1183. https://doi.org/10.1080/13669877.2017.1281339

Freudenburg WR (1988) Perceived risk, real risk: social science and the art of probabilistic risk assessment. Science 242:44–49

Frey C, Osborne M (2013) The future of employment: how susceptible are jobs to computerisation? Technical Report, Oxford Martin School, University of Oxford, Oxford, UK

Foucault M (1978) Governmentality. Ideol Conscious 6:5–12

Foucault M (1980) Power/knowledge: collected interviews and other essays 1971–1977. Harvester Press, Brighton

Foucault M (1982) The subject and power. Crit Inq 8:777–795

Foucault M (1991) Governmentality. In: Burchell G, Gordon C, Miller P (eds) The Foucault effect: studies in governmentality. Harvester Wheatsheaf, London, pp 87–104

Glaeser E (2014) Secular joblessness. In: Teulings C, Baldwin R (eds) Secular stagnation: facts, causes, and cures. Centre for Economic Policy Research (CEPR), London, pp 69–82

Good IJ (1965) Speculations concerning the first ultraintelligent machine. Adv Comput 6:31–88. https://doi.org/10.1016/S0065-2458(08)60418-0

HC—House of Commons (2016) Robotics and artificial intelligence. https://publications.parliament.uk/pa/cm201617/cmselect/cmsctech/145/14502.htm. Accessed 8 June 2018

HL—House of Lords (2018). AI in the UK: ready, willing and able? https://www.parliament.uk/business/committees/committees-a-z/lords-select/ai-committee/news-parliament-2017/ai-report-published/. Accessed 4 May 2018

Kahneman D, Slovic P, Tversky A (1982) Judgment under uncertainty: heuristics and biases. Cambridge University Press, New York

Kasperson R (1992) The social amplification of risk: progress in developing an integrative framework of risk. In: Krimsky S, Golding D (eds) Social theories of risk. Praeger, Westport, p 153

Kasperson R, Renn O, Slovic P, Brown HS, Emel J, Goble R, Kasperson J, Ratick S (1988) The social amplification of risk: a conceptual framework. Risk Anal 8(2):177–187

Kasperson J, Kasperson R, Pidgeon N, Slovic P (2003) The social amplification of risk: assessing fifteen years of research and theory. In: Pidgeon N, Kasperson R, Slovic P (eds) The social amplification of risk. Cambridge University Press, New York, pp 13–46

Kolodny C (2014) Stephen Hawking is terrified of artificial intelligence. Huffington Post. https://www.huffingtonpost.co.uk/entry/stephen-hawking-artificial-intelligence_n_5267481. Accessed 5 May 2014

Lanier J (2014) The myth of AI: a conversation with Jaron Lanier. Edge.org. https://www.edge.org/conversation/the-myth-of-ai#26015. Accessed 14 Nov 2014

Levy D (2007) Love and sex with robots: the evolution of human-robot relationships. Harper/HarperCollins Publishers, New York

Li N et al (2016) Tweeting disaster: an analysis of online discourse about nuclear power in the wake of the Fukushima Daiichi nuclear accident. J Sci Commun 15(05):A02

Luhmann N (1993) Risk: a sociological theory. A. de Gruyter, New York

Lyng S (1990) Edgework: a social psychological analysis of voluntary risk taking. Am J Sociol 95:851–886

Mokyr J (2014) Secular stagnation? Not in your life. In: Teulings C, Baldwin R (eds) Secular stagnation: facts, causes and cures. Centre for Economic Policy Research (CEPR), London, p 83

NSTC—Executive Office of the President National Science and Technology Council (2016) National Science and Technology Council Committee on Technology. Preparing for the future of artificial intelligence. https://obamawhitehouse.archives.gov/sites/default/files/whitehouse_files/microsites/ostp/NSTC/preparing_for_the_future_of_ai.pdf

Pidgeon N, Kasperson R, Slovic P (2003) The social amplification of risk. Cambridge University Press, New York

Renn O (1991) Risk communication and the social amplification of risk. In: Communicating risks to the public. Springer, Berlin/New York, pp 287–324

Renn O, Burns W, Kasperson J, Kasperson R, Slovic P (1992) The social amplification of risk: theoretical foundations and empirical applications. J Soc Issues 48(4):137–160

Russell S (2015a) Ban lethal autonomous weapons. The Boston Globe 08 09

Russell S (2015b) Take a stand on AI weapons. Nature 521(7553):415–416

Salvadori L, Savio S, Nicotra E, Rumiati R, Finucane M, Slovic P (2004) Expert and public perception of risk from biotechnology. Risk Anal 24:1289–1299

Simon HA (1956) Rational choice and the structure of the environment. Psychol Rev 63:129–138

Sloan L, Morgan J, Housley W, Williams M, Edwards A, Burnap P, Rana O (2013) Knowing the Tweeters: deriving sociologically relevant demographics from Twitter. Sociol Res Online 18:7. https://doi.org/10.5153/sro.3001

Slovic P (1986) Informing and educating the public about risk. Risk Anal 6(4):403–415

Slovic P (2000) The perception of risk. Earthscan, London

Slovic P, Kunreuther H, White G (2000) Decision process, rationality and adjustment to natural hazards. In: Slovic P (ed) The perception of risk. Earthscan, New York

Slovic P, Finucane M, Peters E, MacGregor D (2004) Risk as analysis and risk as feelings: some thoughts about affect, reason, risk, and rationality. Risk Anal 24(2):311–322

Slovic P, Finucane M, Peters E, MacGregor D (2006) The affect heuristic. Eur J Oper Res 177(3):1333–1352

Statista (2018) Number of monthly active Twitter users. https://www.statista.com/statistics/282087/number-of-monthly-active-twitter-users/

Stefanik E (2018) 115th Congress 2nd Session. H.R. 5356 to establish the National Security Commission on Artificial Intelligence. https://www.congress.gov/bill/115th-congress/house-bill/5356

Stilgoe J (2018) Machine learning, social learning and the governance of self-driving cars. Soc Stud Sci 48(1):25–56. https://doi.org/10.1177/0306312717741687

Strekalova YA, Krieger JL (2017) Beyond words: amplification of cancer risk communication on social media. J Health Commun 22(10):849–857. https://doi.org/10.1080/10810730.2017.1367336

Tversky A, Kahneman D (1974) Judgment under uncertainty: heuristics and biases. Science 185:1124–1131

Vinge V (1993) The coming technological singularity: how to survive in the post-human era. Presented at the VISION-21 Symposium sponsored by NASA Lewis Research Center and the Ohio Aerospace Institute, 30–31 March 1993. http://www.rohan.sdsu.edu/faculty/vinge/misc/singularity.html

Wirtz C et al (2018) Rethinking social amplification of risk: social media and Zika in three languages. Risk Anal 38(12)

Wolchover N (2015) Concerns of an artificial intelligence pioneer. Quanta Magazine. Published 21 April 2015. https://www.quantamagazine.org/artificial-intelligence-aligned-with-human-values-qa-with-stuart-russell-20150421/

Nilsson N (2010) The quest for artificial intelligence: a history of ideas and achievements. s.l.:web version

Zajonc RB (1980) Feeling and thinking: preferences need no inferences. Am Psychol 35:151–175

Zhang L, Xu L, Zhang W (2017) Social media as amplification station: factors that influence the speed of on-line public response to health emergencies. Asian J Commun 27(3):322–338. https://doi.org/10.1080/01292986.2017.1290124

Zhou M, Wang M, Zhang J (2017) How are risks generated, developed and amplified? Case study of the crowd collapse at Shanghai Bund on 31 December 2014. Int J Disaster Risk Reduct 24(Supplement C):209–215. https://doi.org/10.1016/j.ijdrr.2017.06.013

Funding

This research was funded by the São Paulo Research Foundation (FAPESP), grants 2018/09681-4 and 2019/07665-4, and by the Brazilian National Council for Scientific and Technological Development (CNPq), grant 312180/2018-7.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Cite this article

Neri, H., Cozman, F. The role of experts in the public perception of risk of artificial intelligence. AI & Soc 35, 663–673 (2020). https://doi.org/10.1007/s00146-019-00924-9
