Abstract

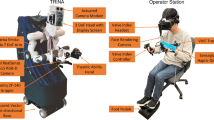

This paper introduces a cost-efficient immersive teleconference system. To enhance immersive interaction during one-to-many telecommunication, it concentrates on the design of a teleconference system composed of a set of robotic devices and web-based control/monitoring interfaces for human-robot-avatar interaction. To this end, we first propose a server-client network model for human-machine interaction systems based on the latest HTML5 technology. As the hardware system for teleconferencing, a simple robot is designed specifically so that remote users can experience augmented reality naturally on live scenes. The modularized software systems are then explained in terms of accessibility and functionality. This paper also describes how human head motion is captured, synchronized with a robot in the real world, and rendered through a 3-D avatar in the augmented world for human-robot-avatar interaction. Finally, the proposed system is evaluated through a questionnaire survey that follows a series of user-experience tests.

Author information

Additional information

Recommended by Editorial Board member Shinsuk Park under the direction of Editor Hyouk Ryeol Choi.

This work was supported by the Global Frontier R&D Program on <Human-centered Interaction for Coexistence> funded by the National Research Foundation of Korea grant funded by the Korean Government (MSIP) (NRF-2012M3A6A3057162).

Heon-Hui Kim received his B.S. degree in Marine System Engineering and his M.S. and Ph.D. degrees in Control and Instrumentation Engineering from Korea Maritime University, Busan, Korea, in 1997, 2002, and 2012, respectively. During 2006–2009, he was a researcher with the Human-friendly Welfare Robot System Engineering Research Center, Korea Advanced Institute of Science and Technology, Daejeon, Korea. Since 2009, he has been a researcher with the Art and Robotics Institute, Kwangwoon University, Seoul, Korea. His current research interests include pattern recognition, robot vision, robot software, and marine robotics.

Jung-Su Park received his B.S. degree in Computer Science and Engineering from Dongguk University, Seoul, Korea, in 2011. He is currently an M.S. candidate in the Department of Computer Science and Engineering, Dongguk University. His current research interests include human-robot interaction and mobile robot navigation with emphasis on intelligent sensor fusion.

Jin-Woo Jung received his B.S. and M.S. degrees in Electrical Engineering from Korea Advanced Institute of Science and Technology (KAIST), Korea, in 1997 and 1999, respectively, and his Ph.D. degree in Electrical Engineering and Computer Science from KAIST, Korea, in 2004. Since 2006, he has been with the Department of Computer Science and Engineering at Dongguk University, where he is currently an Associate Professor. During 2001–2002, he worked as a visiting researcher at the Department of Mechano-Informatics, University of Tokyo. During 2004–2006, he worked as a researcher in the Human-friendly Welfare Robot System Research Center at KAIST, Korea. His current research interests include human behavior recognition with emphasis on biometric applications, multiple-robot cooperation, and intelligent human-robot interaction.

Kwang-Hyun Park received his B.S., M.S., and Ph.D. degrees in electrical engineering and computer science from the Korea Advanced Institute of Science and Technology (KAIST), Daejeon, Korea, in 1994, 1997, and 2001, respectively. During 2001–2003, he was a Research Associate with the Human-Friendly Welfare Robot System Engineering Research Center, KAIST. He was a Research Professor with the Department of Electrical Engineering and Computer Science, KAIST, from 2003 to 2004 and a Visiting Researcher with the College of Computing, Georgia Institute of Technology, Atlanta, USA, from 2004 to 2005. He was also a Visiting Professor with the Department of Electrical Engineering and Computer Science, KAIST, from 2005 to 2008 and is currently an Associate Professor with the School of Robotics, Kwangwoon University, Seoul, Korea. His current research interests include intelligent service robots, human-robot interaction, and pattern recognition.

Cite this article

Kim, HH., Park, JS., Jung, JW. et al. Immersive teleconference system based on human-robot-avatar interaction using head-tracking devices. Int. J. Control Autom. Syst. 11, 1028–1037 (2013). https://doi.org/10.1007/s12555-012-0431-4

DOI: https://doi.org/10.1007/s12555-012-0431-4